Traditional SEO metrics such as rankings, clicks, and impressions are increasingly losing relevance in the context of AI search. When answers are generated directly by systems like ChatGPT, Google AI Overviews, or Perplexity, the logic of visibility fundamentally changes:

The key question today is no longer “Where do I rank?” but “Am I part of the answer?”

To measure this new reality, adapted KPIs are required. Below are the most important KPIs that companies should track regularly:

The Core Success Metrics in AI Search

1. Mentions

How often does your brand appear in AI-generated answers—regardless of whether a source is provided?

→ Indicator of basic presence in the AI ecosystem

Why is this important?

Mentions are the first and lowest-threshold indicator of whether a brand appears at all within an LLM’s “relevant set.” Without mentions, there is no visibility—no matter how good your content is.

Example:

A user asks: “What are the best PDF tools?”

The answer includes: “Adobe Acrobat, Smallpdf, and PDF24 are common solutions…”

→ Adobe receives a mention, even without a link.

What this KPI does well:

Measures basic AI presence

Shows whether a brand exists within the AI’s decision space

Early indicator of successful GEO optimization

What this KPI cannot do:

No insight into quality or sentiment (positive/negative)

No indication of influence on decisions

No differentiation between prominent and incidental mentions

Conclusion:

Mentions are a necessary but not sufficient KPI. They show visibility, but not its actual impact. However, they help identify content and topic gaps that can be expanded to increase AI presence.
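
As a rough illustration, mention counting can be sketched in a few lines of Python. This is a minimal sketch, not a finished tracking setup: it assumes the AI answers have already been collected as plain text, and the function name `count_mentions` and the sample answers are hypothetical.

```python
import re

def count_mentions(answers, brands):
    """Count, per brand, how many answers mention it at least once."""
    counts = {brand: 0 for brand in brands}
    for answer in answers:
        for brand in brands:
            # Case-insensitive whole-word match; re.escape protects
            # brand names that contain special regex characters.
            pattern = r"(?<!\w)" + re.escape(brand) + r"(?!\w)"
            if re.search(pattern, answer, flags=re.IGNORECASE):
                counts[brand] += 1
    return counts

# Hypothetical answers collected for two prompts.
answers = [
    "Adobe Acrobat, Smallpdf, and PDF24 are common solutions for editing PDFs.",
    "For quick conversions, Smallpdf is a popular browser-based option.",
]
mention_counts = count_mentions(answers, ["Adobe", "Smallpdf", "PDF24"])
print(mention_counts)
```

The sketch counts answers rather than individual occurrences, so a brand named three times in one answer still counts once. It says nothing about how prominent or how positive the mention is.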

2. Citations

How often is your content actively used as a source in AI-generated answers?

→ Indicates whether content is considered a trustworthy foundation

Why is this important?

Citations are the strongest quality indicator in AI search. They show not only that a brand is “known,” but that its content is actively used to answer questions.

Example:

A user asks: “How does a PDF editor work?”

The answer includes: “According to Adobe, users can edit PDFs with Acrobat…”

→ Adobe is used as a source.

Important:

A distinction is made between implicit citations, where the source is named in the text (as in the example), and explicit citations, which include a clickable link.
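
The difference between the two citation types can be sketched as a simple classifier. This is only a heuristic under stated assumptions: each answer is assumed to be available as text plus a list of source URLs, and the attribution phrases, the function name `classify_citation`, and the sample inputs are illustrative choices, not a standard.

```python
import re
from urllib.parse import urlparse

# Illustrative attribution phrases; a real setup would need a broader list.
ATTRIBUTION = r"(?:according to|as reported by|as stated by|source:)\s+"

def classify_citation(text, source_urls, brand, domain):
    """Return 'explicit', 'implicit', or 'none' for a single answer."""
    # Explicit: one of the linked sources points to the brand's domain.
    for url in source_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return "explicit"
    # Implicit: the brand is named as the source in the text, without a link.
    if re.search(ATTRIBUTION + re.escape(brand) + r"\b", text, flags=re.IGNORECASE):
        return "implicit"
    return "none"

text = "According to Adobe, users can edit PDFs with Acrobat."
print(classify_citation(text, [], "Adobe", "adobe.com"))
print(classify_citation(text, ["https://www.adobe.com/acrobat"], "Adobe", "adobe.com"))
```

A plain mention such as "Adobe Acrobat is a PDF tool" returns `none` here, which keeps citations separate from the mentions KPI.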

What this KPI does well:

Measures trust and authority

Strong indicator of content quality

Shows actual usage of content; explicit citations can also generate website traffic

What this KPI cannot do:

Can be disproportionately influenced by a few strong pieces of content

Does not capture overall visibility, since mentions without a source are not counted

No direct insight into brand perception

Conclusion:

Citations show whether content is not just visible, but relevant and trustworthy. This creates clear levers: content can be optimized to be cited more often, and gaps can be closed to build authority in key topics.

3. Sentiment

How positively or negatively is your brand portrayed in AI-generated answers?

→ Critical for perception and brand impact

Why is this important?

AI systems reflect existing narratives. The sentiment KPI shows which image of a brand is embedded in the model and therefore what users are likely to read about it.

Example:

“Adobe Acrobat is powerful but expensive…”

→ Mixed sentiment: negative perception of price, positive perception of quality.

What this KPI does well:

Measures brand perception

Early indicator of reputation risks

Steering metric for content strategy

What this KPI cannot do:

Subjective and fluctuating

Prompt-dependent

No direct link to business impact

Conclusion:

Sentiment shows how a brand is evaluated by AI—not just whether it is found. This enables targeted actions: addressing negative narratives and improving perception through strategic content and branding efforts.
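
One way to make sentiment trackable over time is to aggregate per-answer labels into shares and a net score. The sketch below assumes that each answer has already been labeled as positive, mixed, or negative (by a reviewer or a classifier, which is not shown); the label set, the net score definition, and the name `sentiment_summary` are assumptions for illustration.

```python
from collections import Counter

ALLOWED_LABELS = ("positive", "mixed", "negative")

def sentiment_summary(labels):
    """Turn a list of per-answer sentiment labels into shares plus a net score."""
    unknown = set(labels) - set(ALLOWED_LABELS)
    if unknown:
        raise ValueError(f"unexpected labels: {sorted(unknown)}")
    total = len(labels)
    if total == 0:
        return None  # nothing to summarize
    counts = Counter(labels)
    summary = {label: counts[label] / total for label in ALLOWED_LABELS}
    # Net score: share of positive answers minus share of negative answers.
    summary["net"] = summary["positive"] - summary["negative"]
    return summary

# Hypothetical labels for four answers that mention the brand.
labels = ["positive", "mixed", "negative", "positive"]
summary = sentiment_summary(labels)
print(summary)
```

Because sentiment is prompt-dependent, the same prompt set should be used for every measurement so that the net score stays comparable between runs.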

4. Visibility Score / Share of Voice

How visible is your brand compared to competitors across multiple prompts?

→ Should be evaluated over time due to fluctuations

Why is this important?

The visibility score aggregates mentions across a defined prompt set and makes trends measurable. It shows your relative position in the AI landscape.

Important note:

Highly dependent on the selected prompt set (topics, phrasing)

Individual measurements fluctuate → trends over time are key

What this KPI does well:

Enables comparison over time (trend analysis)

Benchmarking against competitors

Measures success of GEO initiatives

What this KPI cannot do:

No insight into quality (sentiment) or trust (citations)

Can be biased by prompt selection

No direct insight into traffic or business impact

Conclusion:

The visibility score shows relative market position—but only within a well-defined prompt set.

Approach:

Define a stable, representative prompt set (use cases & intents)

Track mentions per prompt regularly

Combine the per-prompt results into a weighted index (e.g., share of mentions)

Compare with competitors and focus on trends rather than single data points
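
The approach above can be sketched as a weighted share of mentions. This is one possible index under stated assumptions, not a fixed formula: the prompt weights, the sample data, and the function name `share_of_voice` are hypothetical, and each brand counts at most once per prompt.

```python
def share_of_voice(mentions_by_prompt, weights=None):
    """Compute each brand's weighted share of all brand mentions.

    mentions_by_prompt: {prompt: [brands mentioned in the answer]}
    weights: optional {prompt: weight}; prompts without a weight count as 1.0
    """
    weights = weights or {}
    weighted = {}
    for prompt, brands in mentions_by_prompt.items():
        w = weights.get(prompt, 1.0)
        for brand in set(brands):  # count each brand once per prompt
            weighted[brand] = weighted.get(brand, 0.0) + w
    total = sum(weighted.values())
    if total == 0:
        return {}
    return {brand: value / total for brand, value in weighted.items()}

# Hypothetical results for one measurement run.
mentions_by_prompt = {
    "best pdf tools": ["Adobe", "Smallpdf", "PDF24"],
    "how to edit a pdf": ["Adobe"],
    "free pdf converter": ["Smallpdf"],
}
weights = {"best pdf tools": 2.0}  # assumption: this prompt matters twice as much
sov = share_of_voice(mentions_by_prompt, weights)
print(sov)
```

Running the same calculation on every measurement date with an unchanged prompt set and unchanged weights yields the trend line. A single run on its own is of limited value because individual measurements fluctuate.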

5. Competitive Benchmarking

Which brands are mentioned alongside yours, and who dominates specific topics?

→ Shows real competition within AI answers

Why is this important?

AI search is a competition for answer space. Competitive benchmarking reveals who currently owns the narrative in specific topics.

Example:

A user asks: “What are the best PDF tools?”

The answer includes brands like Adobe, Smallpdf, and PDF24.

→ These brands form the actual competitive landscape in AI.

What this KPI does well:

Identifies new (often unexpected) competitors

Reveals topic ownership and gaps

Provides a foundation for strategic positioning

What this KPI cannot do:

No insight into brand perception (sentiment)

No direct link to traffic or conversions

Dependent on the prompt set

Conclusion:

Competitive benchmarking shows who you are truly competing with in AI—not just in traditional markets. This enables targeted differentiation and the identification of underutilized topics for quick visibility gains.
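
Both questions, who appears alongside your brand and who dominates a topic, can be sketched with simple counts. The example assumes that the brands named in each answer have already been extracted and grouped by topic; the topic names, data, and function names are hypothetical.

```python
from collections import Counter

def co_mentions(answers, own_brand):
    """Count how often each other brand appears in the same answer as own_brand."""
    counts = Counter()
    for brands in answers:
        if own_brand in brands:
            counts.update(b for b in set(brands) if b != own_brand)
    return counts

def topic_leaders(answers_by_topic):
    """Return, per topic, the brand named in the most answers (ties: alphabetical)."""
    leaders = {}
    for topic, answers in answers_by_topic.items():
        counts = Counter(b for brands in answers for b in set(brands))
        if counts:
            leaders[topic] = min(counts, key=lambda b: (-counts[b], b))
    return leaders

# Hypothetical extracted brands, one inner list per AI answer.
answers_by_topic = {
    "pdf editing": [["Adobe", "Smallpdf", "PDF24"], ["Adobe", "Smallpdf"]],
    "pdf conversion": [["Smallpdf", "PDF24"], ["Smallpdf"]],
}
all_answers = [brands for answers in answers_by_topic.values() for brands in answers]
rivals = co_mentions(all_answers, "Adobe")
leaders = topic_leaders(answers_by_topic)
print(rivals.most_common())
print(leaders)
```

Topics in which your own brand is never the leader, or never appears at all, are candidates for the topic gaps mentioned above.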

6. Agentic Traffic

Are AI systems and agents accessing your content?

→ A completely new form of usage

Why is this important?

Agentic traffic is generated by autonomous AI agents that analyze data, make decisions, and perform actions (e.g., research, comparison, even purchase preparation)—often without a direct human click. Unlike mentions, which reflect visibility, agentic traffic shows active usage of content.

Key considerations:

Growth: Increasing rapidly with tools like ChatGPT (browsing/agents), Perplexity, and others

Agentic commerce: Agents may pre-select products or initiate steps in the buying process

Different behavior: Faster, more targeted, often API-driven → new requirements for tracking and infrastructure

Implication: Visibility shifts partly into a “machine layer” beyond traditional clicks

Example:

An AI agent aggregates information on “best PDF tools,” retrieves content from adobe.com, and uses it to build a structured comparison.

→ Content is actively processed without a direct user click.

What this KPI does well:

Shows whether content is actively accessed by AI systems

Early indicator of relevance in agentic workflows

Complements traditional traffic metrics

What this KPI cannot do:

Difficult to distinguish human users from bots and agents

No direct insight into human interaction

No immediate link to business impact

Conclusion:

Agentic traffic shows whether your content exists in the “machine layer.” This opens new levers: optimizing APIs, structured data, and crawlability for AI accessibility.
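
A first approximation of agentic traffic can come from server logs by checking the user agent of each request. The sketch below assumes the user-agent strings have already been extracted from the logs. The token list is illustrative and incomplete, vendors change and extend their identifiers, user agents can be spoofed, and not every agent identifies itself, so the result should be read as a lower bound at best.

```python
from collections import Counter

# Illustrative, incomplete list of user-agent tokens published by AI vendors.
AI_AGENT_TOKENS = {
    "GPTBot": "OpenAI",
    "ChatGPT-User": "OpenAI",
    "OAI-SearchBot": "OpenAI",
    "PerplexityBot": "Perplexity",
    "Perplexity-User": "Perplexity",
    "ClaudeBot": "Anthropic",
}

def classify_user_agent(user_agent):
    """Return the vendor name if the user agent contains a known AI token, else None."""
    ua = user_agent.lower()
    for token, vendor in AI_AGENT_TOKENS.items():
        if token.lower() in ua:
            return vendor
    return None

# Simplified sample user agents (not exact copies of real strings).
user_agents = [
    "Mozilla/5.0 (compatible; GPTBot/1.0)",
    "Mozilla/5.0 (compatible; PerplexityBot/1.0)",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36",
]
vendors = [classify_user_agent(ua) for ua in user_agents]
agent_hits = Counter(v for v in vendors if v is not None)
print(agent_hits)
```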

7. Referred Traffic

Is traffic coming directly from AI systems?

→ Measures the actual impact of AI visibility

Why is this important?

Referred traffic is the most direct proof that AI visibility drives real user behavior. It shows whether users click on your content after seeing an AI-generated answer.

Example:

A user receives an AI-generated answer with a source and clicks the link.

→ Jackpot! Visibility turns into measurable traffic.

What this KPI does well:

Measures real impact of AI visibility

Connects AI search with traditional performance KPIs

Enables attribution of AI-driven traffic

What this KPI cannot do:

Captures only explicit citations with clickable links

Underestimates impact of implicit mentions

Dependent on tracking and referrer detection

Conclusion:

Referred traffic shows whether AI visibility translates into actual usage. This enables optimization of snippets and value propositions to increase click-through rates.
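
Referrer detection can be sketched by matching the referrer hostname against known AI domains. This is a minimal sketch, assuming the referrer URL of each visit is available from analytics or server logs. The domain list and the name `ai_referrer_source` are illustrative, and visits that arrive without a referrer, or from AI features embedded in a regular search results page, would likely not be recognized this way.

```python
from urllib.parse import urlparse

# Illustrative, incomplete mapping of referrer domains to AI systems.
AI_REFERRER_DOMAINS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}

def ai_referrer_source(referrer):
    """Return the AI system a visit came from, or None if the referrer is not a known AI domain."""
    host = (urlparse(referrer).hostname or "").lower()
    for domain, source in AI_REFERRER_DOMAINS.items():
        # Match the domain itself and any subdomain such as www.
        if host == domain or host.endswith("." + domain):
            return source
    return None

# Hypothetical referrers taken from three visits.
referrers = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=best+pdf+tools",
    "https://www.google.com/",
]
sources = [ai_referrer_source(r) for r in referrers]
print(sources)
```

Tagging visits this way makes it possible to report AI-referred sessions next to the existing channel metrics.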

How to Use These KPIs in Practice

Beyond the core metrics, it’s also worth looking at additional KPIs that complete the overall picture and help evaluate AI visibility holistically.

Conclusion

AI search is changing not only how people search—but also how success is measured.

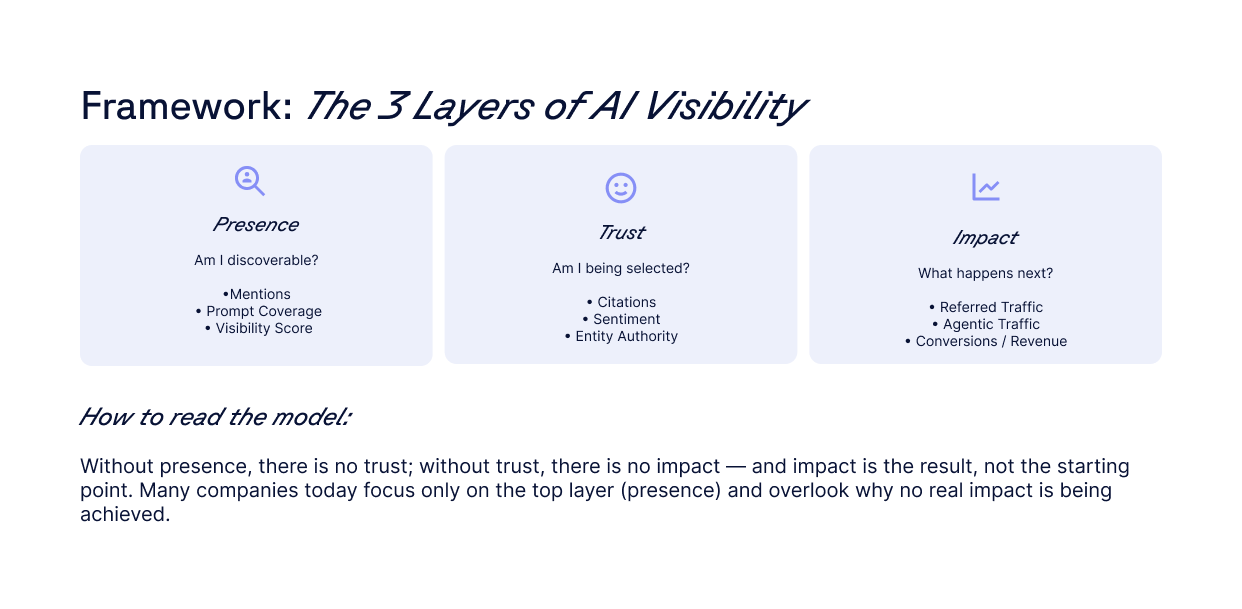

Companies need to understand three levels:

Presence (mentions, coverage)

Trust (citations, sentiment)

Impact (traffic, market share)

The key question today is:

Am I part of the answer, and if so, how visibly and how positively?

Further recommendations for action on AI search can be found in our AI playbook, which is available as a free download.